A lot of us have been waiting for OWASP to publish a new set of top 10. Now that APIs are key in every solution, OWASP published the Top 10 Most Critical API Security Risks! It is worth the time to read it through. You don’t want to be in the news for the wrong reasons. #infosecurity #applicationsecurity

Category: Architecture

Java – Spring Security Framework and Azure AD

Yesterday I was wondering if Microsoft support middleware packages for Java to allow the typical resource provider actions in an access_token or id_tokens, similarly to what the OWIN NuGet packages do or the PassportJS libraries for NodeJS. These last two libraries act as middlewares intercepting the HTTP requests. They allow to, programmatically, parse the Authorization headers to extract Bearer tokens, validate the tokens, extract claims from the tokens etc, etc.

The libraries I had found so far, and that I was familiar with, were the MSAL set of libaries and the ADAL set of libraries. These are client side libraries, meant for applications acting or APIs acting as OAuth2 Clients (not as Resource Providers)

Microsoft does not maintain a Java middleware library similar to OWIN or Passport. The development team is using Spring and will use Azure Active Directory as the Identity Provider, they could use Spring Boot Starter for AAD: https://github.com/microsoft/azure-spring-boot/tree/master/azure-spring-boot-starters/azure-active-directory-spring-boot-starter

Spring Boot is a wrapper of Spring Framework libraries packaged with preconfigured components.

They can also work with AAD and Spring Security, but there aren’t many articles out there related to that framework and how to use it when AAD is the IdP providing JWT tokens except for this one:

Article and related links: https://azure.microsoft.com/en-us/blog/spring-security-azure-ad/

There is also this old blog post with some sample code(using spring framework security, however the example is for illustration purposes only, and uses an access_token issued for a SPA client application in order to request access to an API, which is not exaclty the case of the application we’re trying to modernize (multi-page JSP web application)

https://dev.to/azure/using-spring-security-with-azure-active-directory-mga

Choosing an OAuth2.0 grant flow for your solution.

A colleague of mine that also specializes on Identity Management (IdM) with Identity Providers (IdP) such as AAD, Okta, ADFS, Ping Federate and IdentityServer, published this summary on the 6 different flows encompassed by the OAuth2.0 RFCs.

If you work with the OAuth 2.0 Authorization Framework and the OIDC Authentication Framework, you’ll find the article below very interesting.

One thing to remember when you study the Authorization Grants or Flows in OAuth2.0 is that neither the Extension Grant flow nor the Client Credentials flow require the end user to interact with a browser.

The Client Credentials Flow is designed for headless daemons/services where the is no human interaction. The Extension Grant Flow is sometimes named as OBO (on-Behalf-of) and can be used to exchange SAML2.0 tokens for OAuth2.0 access tokens/bearer tokens. This grant (Extension Grant) doesn’t necessarily require user interaction during the token exchange at the IdP for an authorized application if the token being exchanged is still valid. (OAuth2.0 Extension Grant) In this case the SAML2.0 bearer token with its assertions is exchanged for an access token/bearer token/JWT that represents the client application or service.

To Microservice or not to Microservice – helpful links and resources

Microservices is an approach to application development in which a large application is built as a suite of modular services. Each module supports a specific business goal and uses a simple, well-defined interface to communicate with other modules.

Microservices introduction:

Introduction to Microservices Whitepaper

The initial white paper on microservices by Martin Fowler and James Lewis (it is a bit dense, but it highlights the intent of this architecture and it is one of the first whitepapers published on the subject):

Using API gateway to get coarser calls and group microservices:

Foreign Key relationship conundrum:

Foreign Keys and Microservices

Distributed transactions and microservices, how to keep referential integrity:

Distributed Transactions Strategy in Microservices

Netflix whitepaper:

Netflix Architectural Best Practices

IASA Free Resources/Whitepapers:

Successfully implementing a MSA

And finally, if you have a pluralsight trial subscription and you prefer to watch a video instead of reading a bunch of articles, this course is a good introduction to the pattern, the MSDN Professional subscriptions should include a few courses trial at Pluralsight for free:

Happy design and coding!

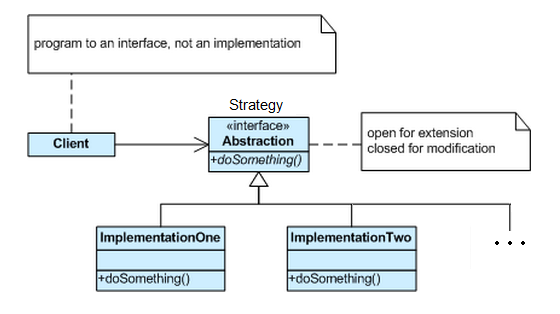

I need to get rid of that switch statement. What is the Strategy Pattern?

I’m a big advocate of software maintainability and there is nothing better for that than applying well known patterns to improve the existing code. Each time I see long if..then..else constructs, or switch statements to drive logic, I think of how much better the code would be if we allow encapsulation and use one of my favorite behavioral pattern… => the Strategy Pattern.

A Strategy is a plan of action designed to achieve a specific goal

A Strategy is a plan of action designed to achieve a specific goal

This is what this pattern will do for you: “Define a family of algorithms, encapsulate each one, and make them interchangeable. Strategy lets the algorithm vary independently from clients that use it.” (Gang of Four);

Specifies a set of classes, each representing a potential behaviour. Switching between those classes changes the application behavior. (the Strategy). This behavior can be selected at runtime (using polymorphism) or design time. It captures the abstraction in an interface, bury implementation details in derived classes.

When we have a set of similar algorithms and its need to switch between them in different parts of the application. With Strategy Pattern is possible to avoid ifs and ease maintenance;

Now, how can we digest that in code, now that you got the gist of the problem and want a better solution than your case statements.

This example I’ll be showing is a pure academic exercise:

The problem to solve is given a string as an input, create a parsing algorithm(s) that given a text stream identifies if the text complies with the following patterns. Angle brackets should have an opening and closing bracket and curly brackets should also have an opening and closing bracket, no matter how many characters are in the middle. These algorithms must be tested for performance.

<<>>{} True

<<{>>} False

<<<> False

<<erertjgrh>>{sgsdgf} True

Continue reading I need to get rid of that switch statement. What is the Strategy Pattern?

Technical Debt – What it is and what to do about it

The following paragraphs in italic are taken from an article in IASA (International Association of Software Architects) written by Gene Hughson

In spite of all that’s been written on the subject of technical debt, it’s still a common occurrence to see it defined as simply “bad code”. Likewise, it’s still common to see the solution offered being “stop writing bad code”. It’s not that simple.

What is Technical Debt?

A design or construction approach that’s expedient in the short term but that creates a technical context in which the same work will cost more to do later than it would cost to do now (including increased cost over time) .

Technical debt may not only incur costs due to rework of the original item, but also by making more difficult changes that are dependent on the original item.

Deferring bug fixes is a form of technical debt as is deferring automation of recurring support tasks.

Dependencies can be a source of technical debt, both in regard to debt they carry and in terms of their fitness to your purpose.

The platform that hosts your application is yet another potential source of technical debt if not maintained.

As noted above, the “interest” on technical debt can manifest as the need for rework and/or more effort in implementing changes over time. This increase in effort can come through the proliferation of code to compensate for the effects of unresolved debt or even just through increased time to comprehend the existing code base prior to attempting a change.

In short, technical debt is any technical deficit that involves a risk of greater cost to expand the application and/or end user dissatisfaction.

What to do about it?

Becoming aware of existing debt is a critical first step, but is insufficient in itself. Taking steps to actively manage the system’s debt portfolio is essential. The first step should be to stop unconsciously taking on new debt. Latent debt tends to fit into the immediate, unexpected payback model mentioned above. Likewise, steps taken to improve the quality up front (unit testing, code review, static analysis, process changes, etc.) should reduce the effort needed for detection and remediation. Architectural and design practices should also be examined. Too little design can be as counter-productive as too much.

Just as the taking on of new debt should be done in a rational manner, so should be the retirement of old debt.

Think of technical debt as credit card debt and you’ll realize why it is important to account for it, be aware of it and pay it off before funding any new features.

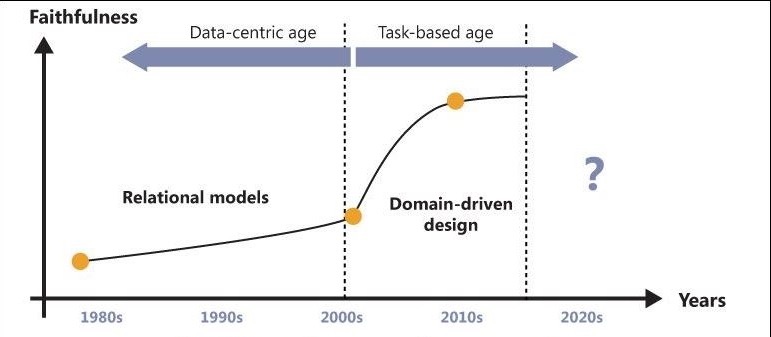

Domain Modeling, why it is replacing Data Modeling and bottom up designs

Complexity is the driving force for the adoption of the Domain Model pattern. Complexity should be measured in terms of the current requirements and what processes are modeled or automated by the application.

A domain model is a collection of plain old classes, each of which faithfully represents a significant entity in the business domain. These classes are data containers and can expose public properties, but they also are expected to expose methods. The term POCO (Plain Old CLR Object) is often used to refer to classes in a domain model. At some point, the classes in the domain model needs to be persisted. Persistence is not a responsibility of the domain model, and it will happen outside of the domain model through rep ositories connected to the infrastructure layer.

ositories connected to the infrastructure layer.

The conversion between the model and relational store is typically performed by ad hoc tools— specifically, Object/ Relational Mapper (O/ RM) tools, such as Microsoft’s Entity Framework or NHibernate. The unavoidable mismatch between the domain model and the relational model is the critical point of implementing a Domain Model pattern.

For decades (1980 to 1990s), relational data modeling was the most effective way to model the business layer of software applications. In the .NET space, the turning point came in the early 2000s, when more and more companies that still had their core business logic carved in the rough stone of mainframes took advantage of the Microsoft .NET Framework and Internet breakthroughs to renovate and modernize their systems. In only a few years, this poured an incredible amount of complexity on the shoulders of developers. RAD (Rapid Application Development) and relational modeling then— we’d say almost naturally— appeared worn out and their limits were exposed.

All the text above was taken from “Esposito, Dino; Saltarello, Andrea (2014-08-28). Microsoft .NET – Architecting Applications for the Enterprise (2nd Edition) (Developer Reference)” with permission from the authors.

Complexity is tackled best using classes that model the business with ubiquitous language. Language that is understood by the technical modelers (software architects/developers) and by the business counterparts or system matter experts. Domain Services encapsulate services that can be consumed by other applications or different GUIs. The focus now is in modeling the domain and modeling the behavior, rather than modeling the data (RDBM) model without events or behavior. The data model, as a starting point in the design, falls short without modeling the sequence of events (behavior) and the flow of information.

Selling my book collection at Amazon…

I recently moved to a smaller place and can’t keep all my technical book collection.

I’m selling the books that I already read, no longer need or have duplicates. Some of them are for old .NET frameworks, but if you are maintaining legacy applications with these framework versions, the books from Microsoft Press can be helpful.

There are also books about SOA and SOAP, SQL Server specific books etc. The books are selling pretty fast.

You can access my seller’s collection at Amazon here.

All the prices are set for shipping within the USA only.

Happy coding!

Never underestimate the importance of training your IT personnel

The Eight Fallacies of Distributed Computing

Almost everyone, when they first start building a distributed application, make the following eight assumptions. All are proven to be false in the long run and cause trouble and painful learning experiences:

- The network is reliable

- Latency is zero

- Bandwidth is infinite

- The network is secure

- Topology doesn’t change

- There is one administrator

- Transport cost is zero

- The network is homogeneous

Keep the points above in mind when you design a distributed application. They are fallacies that increase the maintenance costs of your distributed application.